
Remarkably enough, small-worlds are also found in brains, social networks, software architecture and power grids. This is somewhat counterintuitive, because randomly wiring up a network almost never leads to a small-world. So why do all these networks share these characteristics if their likelihood is so small? Maybe because they are subject to the same forces of formation. Some experimental models for artificial brains tend to build clusters and shortcuts all by themselves while synchronizing activity between artificial neurons. This kind of behaviour is called self-organization, and the resulting properties are sometimes referred to as emergent. But why would brains and languages self-organize to similar structures? Could it be that communication between two humans is in some very basic way similar to communication between two brain cells?

Revision as of 01:36, 23 December 2017


Paper

I'm still working on this page, but our paper is here. I (Daan van den Berg) welcome all feedback you might have. Look me up in the UvA-directory, on LinkedIn or Facebook.

Japanese Kanji Characters

The whole idea was quite simple actually, and born from the language enthusiasm of three programmers. Japanese is not an easy language to pick: it sports a 60,000-piece character set named Kanji. Although you 'only' need to learn about 2,000 of them to read the language, this is still a formidable exercise. There is some systematicity though; many Kanji are composed of one or more of 252 components. Individual Kanji are said to carry meaning in a word-like manner, and akin to how compound words are built up ("swordfish", "snowball", "fireplace"), Kanji often derive meaning from their combined constituent components, and are explained as such in textbooks for Japanese children and foreign students of the language.

Kanjis & Small-Worlds


The network of connected Kanji turned out to be a small-world network, which means it has a high clustering coefficient, a low average path length and a low connection density (explained in detail below). As we found out soon enough, computational linguists had already found a large number of small-worlds in different languages on various levels, but this network of Kanji sharing components was not yet one of them. As such, it neatly lined up with existing research in quantitative linguistics and was accepted for publication at IARIA's Data Analytics '17 conference.
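As a rough illustration of how such a network could be assembled, the sketch below connects two Kanji whenever they share at least one component and then computes the connection density. The five decompositions are a toy assumption chosen for readability, not the 252-component list or the data used in the paper, and the names `COMPONENTS`, `shared_component_edges` and `connection_density` are hypothetical.

```python
from itertools import combinations

# Toy decompositions for illustration only (an assumption, not the paper's data).
COMPONENTS = {
    "休": {"人", "木"},
    "林": {"木"},
    "森": {"木"},
    "明": {"日", "月"},
    "早": {"日", "十"},
}


def shared_component_edges(components):
    """Connect every pair of kanji that has at least one component in common."""
    return {
        frozenset((a, b))
        for a, b in combinations(components, 2)
        if components[a] & components[b]
    }


def connection_density(n_vertices, n_edges):
    """Fraction of all possible undirected edges that are actually present."""
    return n_edges / (n_vertices * (n_vertices - 1) / 2)


EDGES = shared_component_edges(COMPONENTS)
DENSITY = connection_density(len(COMPONENTS), len(EDGES))
print(len(EDGES), DENSITY)
```

A five-vertex toy graph like this is of course far denser than a network over thousands of characters; it only shows the construction rule.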

The functional connectivity graph of the brain is one of many well-known small-worlds; see Sporns & Honey (2006) and others. Image adapted from Sporns' book "Discovering the Human Connectome".

Clustering Coefficient & Average Path Length (optional)

First let's have a brief look at what makes a small-world network a small-world network; it's not all that difficult. First of all: it's about sparse networks, that is, networks with relatively low edge densities. Small-world networks have a high clustering coefficient. The clustering coefficient of a vertex is the fraction of possible connections between its neighbours that actually exist. Vertex C has four neighbours. Between these four we have three edges, out of a possible six, so the clustering coefficient of C is 0.5. Similarly, the clustering coefficient of B is 1, and that of F is 0.33 if we disregard the two neighbours it must have outside the picture. If we don't disregard those, it has five neighbours with either one or two connections between them (out of a possible ten), so the clustering coefficient of F would be either 0.1 or 0.2. The clustering coefficient of the network is simply the average over all its vertices.
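The calculation can be sketched in a few lines of Python. The edge list below is a hypothetical graph, built only so that vertex C has four neighbours with three edges between them and vertex B has a coefficient of 1; it is not the graph from the picture, and the function names are made up for this example.

```python
from itertools import combinations


def build_graph(edges):
    """Turn an undirected edge list into a dict mapping vertex -> set of neighbours."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def local_clustering(graph, v):
    """Fraction of possible edges between the neighbours of v that actually exist."""
    neighbours = graph[v]
    k = len(neighbours)
    if k < 2:
        return 0.0  # convention: fewer than two neighbours means no pairs to connect
    links = sum(1 for a, b in combinations(neighbours, 2) if b in graph[a])
    return links / (k * (k - 1) / 2)


def average_clustering(graph):
    """Clustering coefficient of the network: the mean over all vertices."""
    return sum(local_clustering(graph, v) for v in graph) / len(graph)


# Hypothetical example graph (assumption: not the figure's actual edges).
GRAPH = build_graph([
    ("C", "A"), ("C", "B"), ("C", "D"), ("C", "E"),
    ("A", "B"), ("A", "D"), ("D", "E"),
])

print(local_clustering(GRAPH, "C"))  # three of six possible edges among C's neighbours
print(local_clustering(GRAPH, "B"))  # B's two neighbours are connected to each other
print(average_clustering(GRAPH))
```

Returning 0 for vertices with fewer than two neighbours is one common convention; other treatments (such as leaving those vertices out of the average) would change the network-level value.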


Gelb's Hypothesis: from pictures to sounds

So many language networks have small-world properties. But for Japanese, there's a little more to it. As it turns out, there's an old conjecture by Ignace Jay Gelb (1907-1985) that appears to coincide with our findings quite neatly. An American linguist of Polish descent, Gelb hypothesized that all written languages go through a phase transition from being picture-based to being sound-based. On closer inspection, this idea is quite coarse and the exact trajectory might differ from language to language, but the core concept remains quite alluring, especially where it concerns Japanese Kanji.

Ignace Jay Gelb (1907-1985), and his hypothesis as visualized by Tadao Miyamoto in his 2007 paper. Note how the initially quite pictorial characters in this Cuneiform script evolve into a set of characters built up from relatively repetitive components.

Kanjis correspond to sounds too

So it seems that the successive disappearance of visual features accounts for the clustering found in the network of Kanji. In other words: Kanji have become simpler through time. They are nowhere near the elaborate pictures they were around 2,000 years ago, but are in many ways symbolic abstractions of their ancient precursors.

Kanji evolution through time. Notice how visual similarity has increased, especially between 'horse' and 'fish'. Adapted from www.tofugu.com

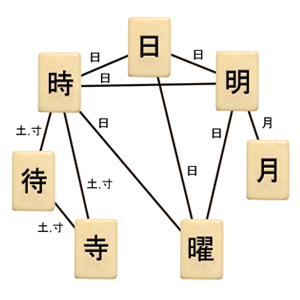

These seven characters all share a visual component and a pronunciation ("rei"). Although Kanji meanings are often explained through combined components, this explanation looks unconvincing in some cases. Do components really add an element of meaning to a Kanji? Or is it actually an element of sound?

Whoswho&where


More

Maybe later.